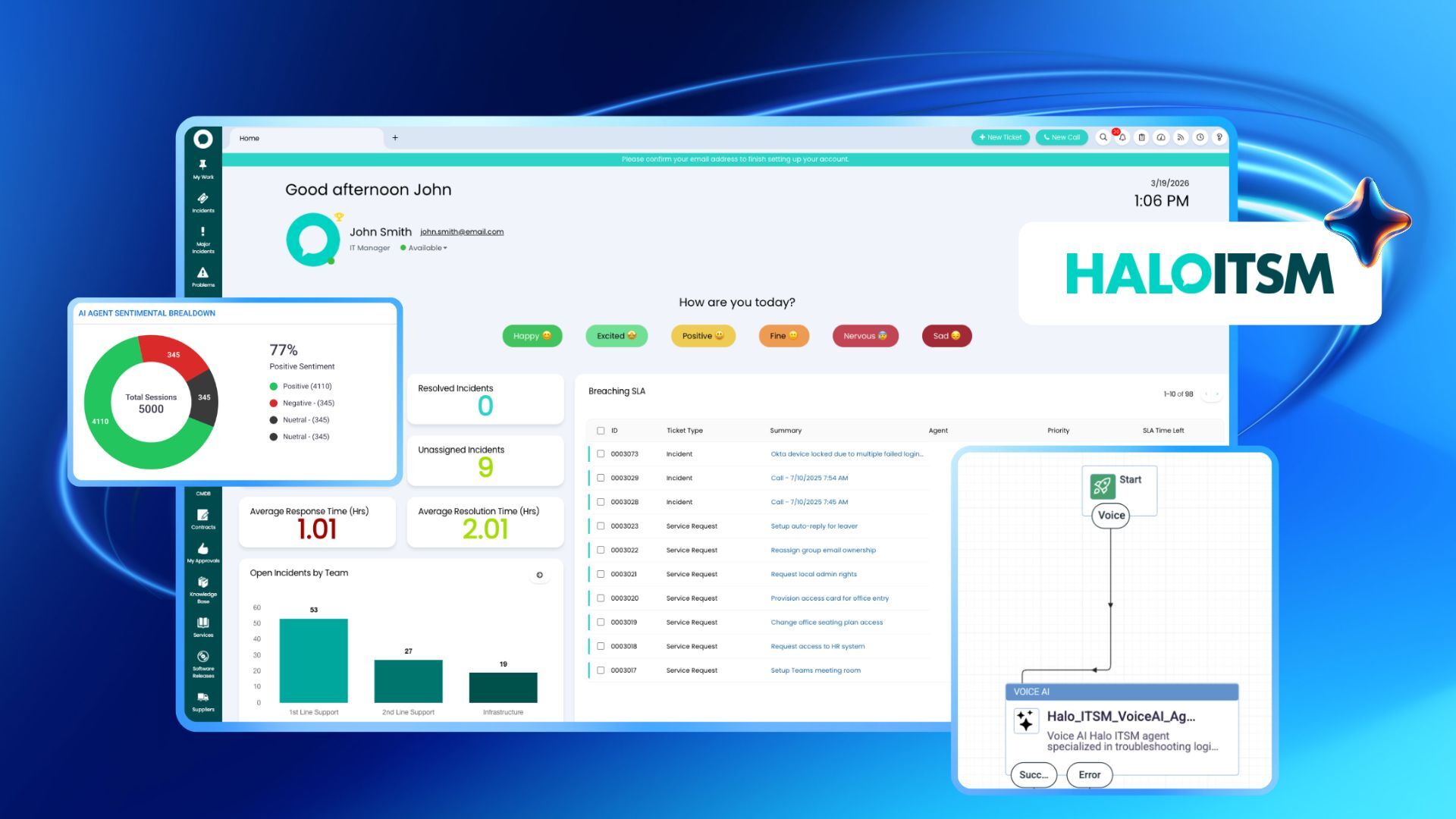

The promise of Voice AI in the enterprise has shifted from "automated routing" to "autonomous resolution." For leadership in CX, HR, and IT, the goal is no longer just to answer the phone but rather to resolve complex inquiries with the same precision and empathy as a human agent. However, as organizations integrate Large Language Models (LLMs) and agentic workflows into their contact centers, a critical bottleneck has emerged: the knowledge base.

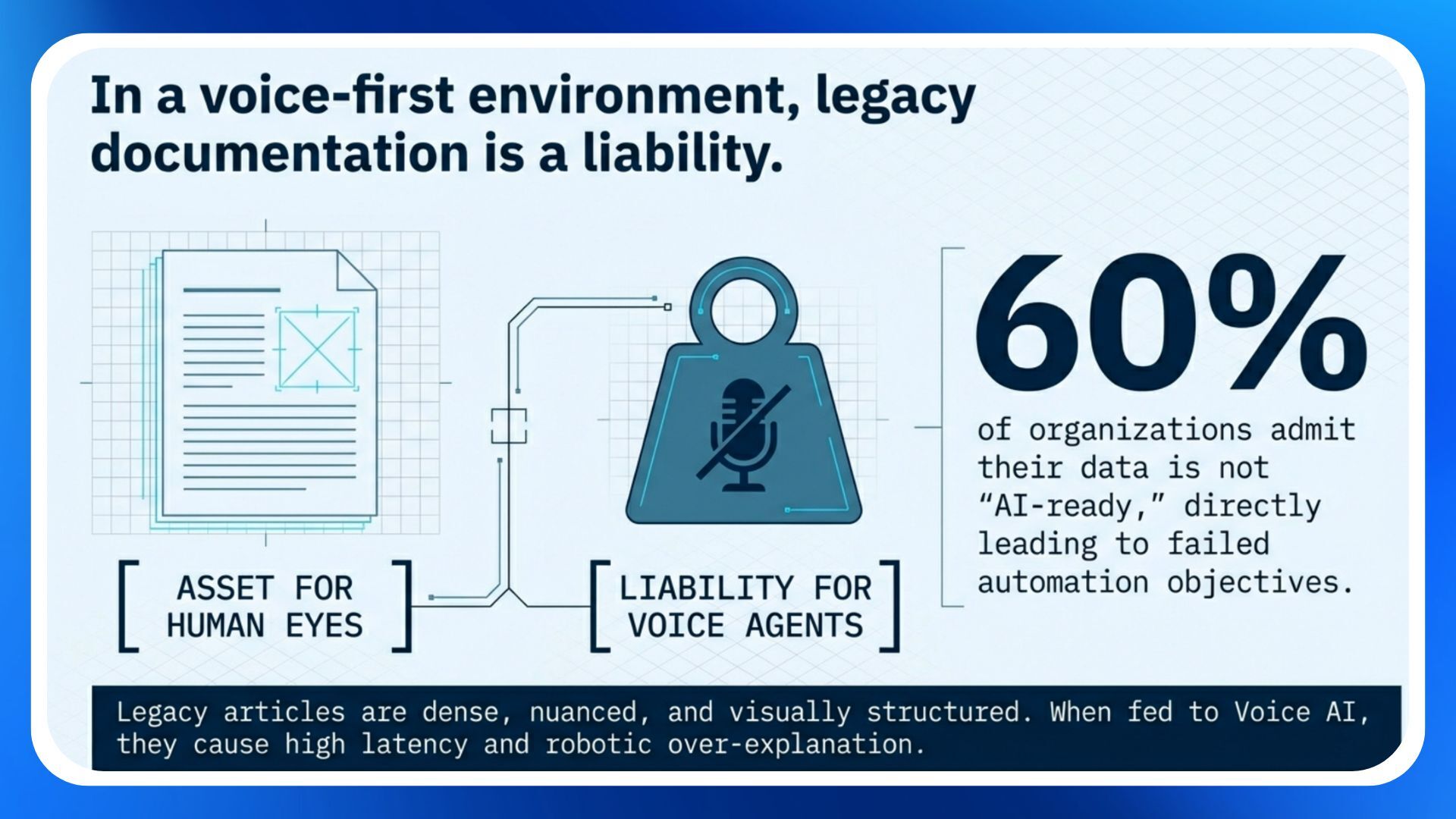

Most legacy knowledge articles were written for human eyes: dense, nuanced, and visually structured. When fed into a Voice AI agent, this "human-first" content often leads to high latency, "robotic" over-explanation, or worse, hallucinated inaccuracies. To achieve enterprise-grade performance, leaders must pivot from traditional Knowledge Management (KM) to Knowledge Engineering.

The voice-first knowledge gap: why traditional articles fail

In a digital-first world, a 1,500-word "How-To" guide with screenshots is an asset. In a voice-first world, it is a liability. According to research, nearly 60% of organizations admit their data is not "AI-ready," leading to failed automation objectives.


Voice AI agents interact with knowledge differently than humans or even text-based chatbots. They rely on Retrieval-Augmented Generation (RAG) to fetch information in real time. If that information is buried in a long PDF or article, the agent must process too many tokens. That increases latency, which callers experience as "dead air" on the line, and dead air destroys customer trust.

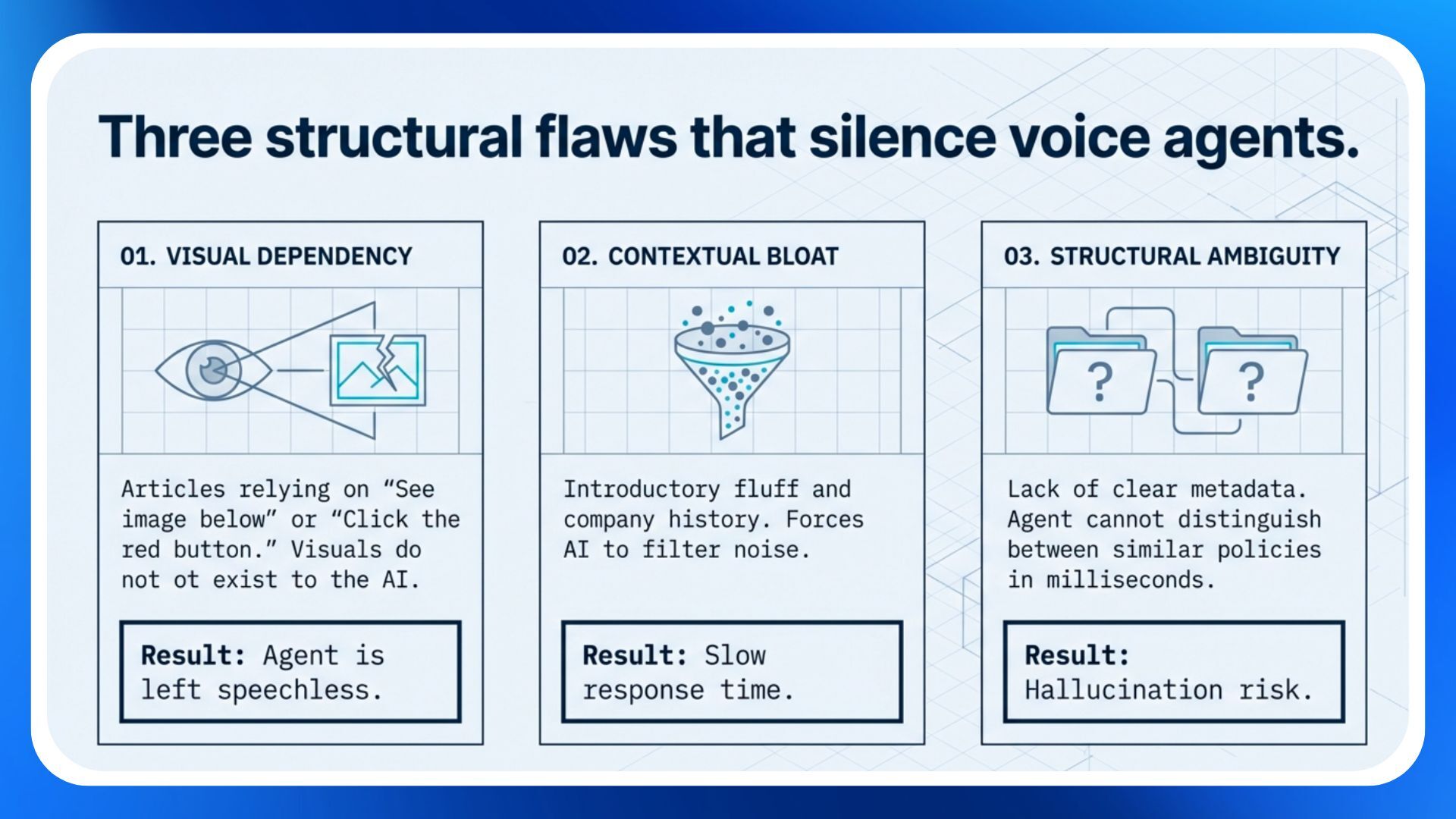

Key Hurdles in Legacy Knowledge Sets:

- Visual dependency: articles that rely on "See image below" or "Click the red button" leave voice agents speechless.

- Contextual bloat: introductory "fluff" or company history in an article forces the AI to filter out noise, slowing down the response time.

- Structural ambiguity: lack of clear metadata makes it difficult for the agent to distinguish between a "California Policy" and a "Texas Policy" in a split second.
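To illustrate the structural ambiguity point, here is a minimal sketch of metadata-filtered retrieval. The chunk store, the `intent` and `region` field names, and the `retrieve` helper are all hypothetical, and the policy text is a placeholder rather than real content.

```python
from typing import Optional

# Hypothetical chunk store; field names and placeholder text are assumptions.
chunks = [
    {"intent": "parental_leave", "region": "CA", "text": "<California policy text>"},
    {"intent": "parental_leave", "region": "TX", "text": "<Texas policy text>"},
    {"intent": "password_reset", "region": None, "text": "<Password reset steps>"},
]

def retrieve(intent: str, region: Optional[str] = None) -> list:
    """Return chunks for an intent, keeping region-specific and region-neutral ones."""
    return [
        c for c in chunks
        if c["intent"] == intent and c["region"] in (region, None)
    ]

# A Texas caller only ever sees the Texas policy chunk.
texas_hits = retrieve("parental_leave", region="TX")
print([c["region"] for c in texas_hits])
```

With explicit metadata, the agent can narrow the candidate set before any text is sent to the model, instead of asking the model to work out which state a policy applies to.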


Best practices for optimizing knowledge for Voice AI

To optimize your system of record – whether it’s an IT, HR, or CRM platform – for voice, the following methodology will come in handy:

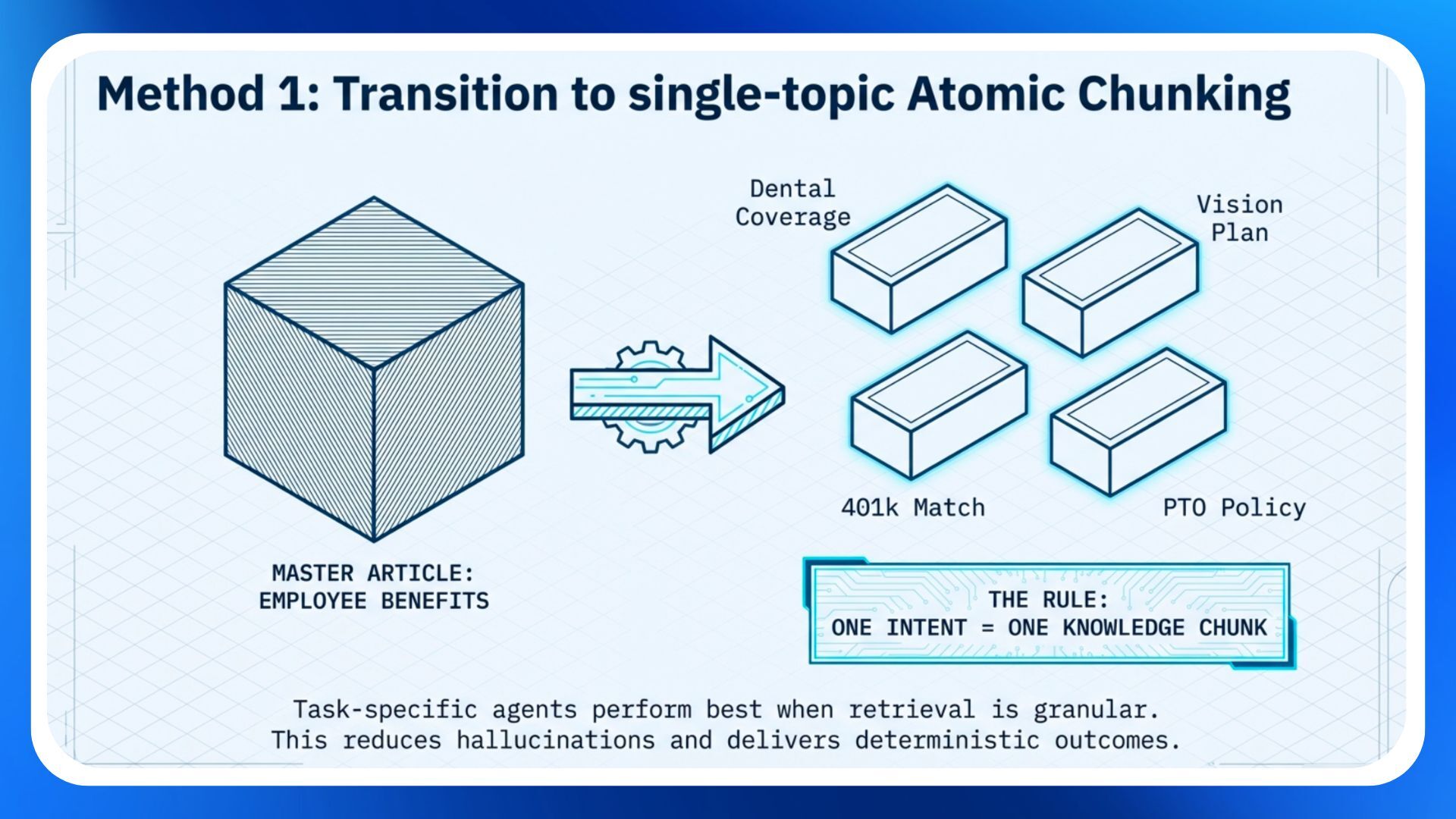

1. Transition to "single-topic" atomic chunking

Instead of one master article for "Employee Benefits," break the content into atomic, single-topic chunks, because "task-specific" agents perform best when retrieval is granular.

- The rule: one intent = one knowledge chunk.

- The benefit: reduces the opportunity for hallucinations and delivers more deterministic outcomes.
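As a minimal sketch of the "one intent = one knowledge chunk" rule, the example below splits a master article into one chunk per H2 heading. It assumes the article is already in markdown with one H2 per topic. The `split_into_chunks` helper and the sample article are hypothetical.

```python
import re

def split_into_chunks(article: str) -> list:
    """Split a markdown article into one chunk per H2 heading."""
    chunks = []
    # Split just before every line that starts with "## ".
    for block in re.split(r"(?m)^(?=## )", article):
        block = block.strip()
        if not block.startswith("## "):
            continue  # skip the title and any preamble before the first H2
        title, _, body = block.partition("\n")
        chunks.append({"intent": title[3:].strip(), "text": body.strip()})
    return chunks

# Hypothetical master article used only to exercise the helper.
master_article = """# Employee Benefits

Welcome to the benefits overview.

## Enroll in dental coverage
Tell the user to open the Benefits portal and select Dental.

## Change 401(k) contribution
Tell the user to open the Retirement tab and enter a new percentage.
"""

chunks = split_into_chunks(master_article)
for chunk in chunks:
    print(chunk["intent"], "->", chunk["text"])
```

The introductory paragraph is dropped on purpose: it carries no intent of its own, so it would only add noise to retrieval.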


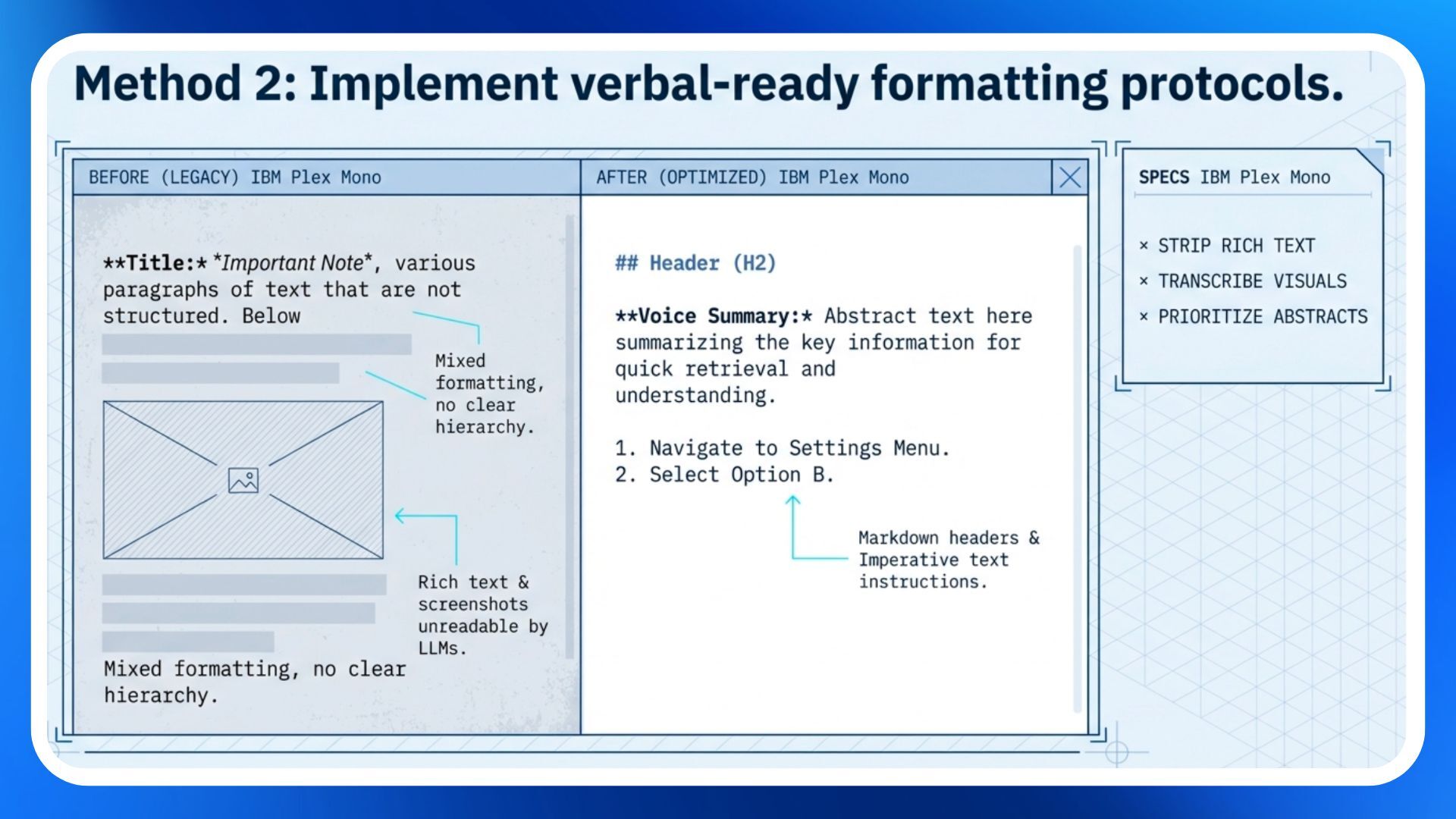

2. Implement "verbal-ready" formatting

Voice AI needs to "read" the article to "speak" the answer. This requires stripping away complex rich-text formatting that LLMs struggle to parse.

- Use markdown: use simple H2 and H3 headers to establish hierarchy.

- Remove non-text elements: if a process is only explained in a screenshot, it doesn't exist to the Voice AI. Transcribe every visual step into clear, imperative text (e.g., "Tell the user to navigate to the 'Settings' menu").

- Prioritize the abstract: place a "Voice Summary" or "Abstract" at the very top of every article. High-performance integrations prioritize these abstracts for "Genius Results" in voice interactions.

- Tagging: tag the articles with relevant keywords to improve searchability.

- Use consistent formatting across the knowledge base: common formats include how-to articles (an introduction followed by instructions) and FAQs (question and answer).
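One way to audit articles against the formatting rules above is a small lint pass that flags visual dependencies before content reaches the voice agent. This is a sketch under stated assumptions: the `VISUAL_PATTERNS` list and the `find_visual_dependencies` helper are hypothetical, and the patterns would need extending for real content.

```python
import re

# Hypothetical patterns; extend them for your own knowledge base.
VISUAL_PATTERNS = [
    r"!\[[^\]]*\]\([^)]*\)",                               # markdown image
    r"\bsee (the )?(image|screenshot|figure|diagram)\b",   # "See image below"
    r"\bshown (below|above)\b",                            # "as shown below"
    r"\b(red|green|blue|yellow) button\b",                 # color-only cues
]

def find_visual_dependencies(text: str) -> list:
    """Return every phrase in the text that depends on something visual."""
    hits = []
    for pattern in VISUAL_PATTERNS:
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            hits.append(match.group(0))
    return hits

legacy = "Open Settings. See image below, then click the red button.\n![step 2](step2.png)"
voice_ready = "Tell the user to open the Settings menu, then select Reset Password."

print(find_visual_dependencies(legacy))       # three findings to rewrite
print(find_visual_dependencies(voice_ready))  # empty list
```

An article that returns an empty list is not automatically voice-ready, but a non-empty list is a reliable signal that a step still needs to be transcribed into text.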


3. Cleanse the "system of record" data

A voice agent is only as good as the data it can access. For many enterprises, the issue isn't the knowledge article, but the System of Record (SoR) data it relies on for personalization.

- Standardize formats: validate that phone numbers are in E.164 format and that names are consistent. If a caller asks about their "Macbook" but the SoR only lists "Hardware Asset #492," the AI may fail to connect the dots.
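A minimal sketch of both checks follows. The E.164 pattern reflects the standard's shape: a leading "+", a non-zero first digit, and at most 15 digits in total. The asset records, the `aliases` field, and the `find_asset` helper are hypothetical and only illustrate how a spoken name could be mapped to an SoR entry.

```python
import re

# E.164: "+" then 2 to 15 digits, with a non-zero leading digit.
E164 = re.compile(r"\+[1-9][0-9]{1,14}")

def is_e164(number: str) -> bool:
    """True if the string is a phone number in E.164 format."""
    return E164.fullmatch(number) is not None

# Hypothetical SoR records with caller-friendly aliases added.
assets = [
    {"asset_id": "Hardware Asset #492", "aliases": ["macbook", "laptop"]},
]

def find_asset(spoken_name: str):
    """Map what the caller said to an SoR record, or None if nothing matches."""
    key = spoken_name.strip().lower()
    for asset in assets:
        if key in asset["aliases"]:
            return asset
    return None

print(is_e164("+14155552671"))    # True
print(is_e164("(415) 555-2671"))  # False
print(find_asset("Macbook"))
```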

Strategic governance

As enterprise applications evolve, Gartner predicts approximately 40% will feature task-specific AI agents this year, a shift that is fundamentally transforming the role of the Knowledge Manager into that of an “AI Content Designer”. This new era of strategic governance necessitates a "human-in-the-loop" approach, ideally managed by a cross-functional AI Council in which the VP of CX defines persona and tone, the CHRO ensures policy accuracy, and IT Leadership maintains integration security. To sustain high performance, organizations will need to implement a continuous improvement process centered on three pillars:

- Red teaming to stress-test retrieval through complex or mumbled queries.

- Latency monitoring to ensure responses remain fast, supported by optimal indexing.

- Gap analysis to turn "No Result" queries into a concrete roadmap for future knowledge creation.
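The latency monitoring and gap analysis pillars can be sketched against a retrieval log. Everything here is an assumption made for illustration: the log schema (`query`, `latency_ms`, `results`), the 1,000 ms budget, and the sample rows, which are placeholders rather than measurements.

```python
from collections import Counter
from statistics import quantiles

# Placeholder log rows (not real measurements); the schema is hypothetical.
call_log = [
    {"query": "reset my password", "latency_ms": 420, "results": 3},
    {"query": "update 401k contribution", "latency_ms": 610, "results": 2},
    {"query": "parental leave in texas", "latency_ms": 1850, "results": 0},
    {"query": "reset my password", "latency_ms": 390, "results": 3},
    {"query": "parental leave in texas", "latency_ms": 1790, "results": 0},
    {"query": "expense a standing desk", "latency_ms": 900, "results": 0},
]

LATENCY_BUDGET_MS = 1000  # assumed budget; tune to your own call experience

# Latency monitoring: 95th percentile and count of calls over budget.
latencies = [row["latency_ms"] for row in call_log]
p95 = quantiles(latencies, n=20, method="inclusive")[-1]
over_budget = sum(1 for ms in latencies if ms > LATENCY_BUDGET_MS)

# Gap analysis: rank "No Result" queries by how often they occur.
gaps = Counter(row["query"] for row in call_log if row["results"] == 0)

print(f"p95 latency: {p95:.0f} ms, calls over budget: {over_budget}")
for query, count in gaps.most_common():
    print(f"{count}x no result: {query}")
```

The ranked gap list doubles as a backlog: the most frequent "No Result" query is the next knowledge chunk to write.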

The business outcome: ROI beyond containment

Optimizing knowledge for voice isn't just about "containing" calls; it’s also about Total Cost of Ownership (TCO) and Brand Equity. When an AI agent can accurately resolve a 401(k) inquiry or a password reset on the first attempt because it found the exact knowledge chunk it needed, the business realizes a number of benefits, including:

- Reduced Average Handle Time (AHT): no more "Let me look that up for you" pauses.

- Higher First Contact Resolution (FCR): accuracy driven by grounded, high-quality data.

- Improved Employee Sentiment (ESAT): human agents are liberated from addressing "High-Volume, Low-Complexity" (HVLC) tasks to focus on high-value empathy work.

Conclusion: start with the foundation

The most advanced Voice AI in the world will fail if it is grounded in a messy, visual-heavy, and outdated knowledge base. For enterprise leaders, the path forward is clear: treat your knowledge base as a structured data asset, not a digital library.

Next Steps for Leadership:

- Audit: identify your top 10 most frequent voice inquiries.

- Refactor: rewrite the corresponding 10 knowledge articles following the methodology above.

- Pilot: measure the latency and accuracy of a Voice AI agent using these optimized articles versus your legacy set. Refine and then deploy.
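For the pilot step, a simple side-by-side summary is often enough to start. The sketch below assumes each test call is recorded with a `correct` flag and a `latency_ms` value. The `summarize` helper is hypothetical, and the rows are placeholder values chosen only to exercise the code, not pilot results.

```python
from statistics import median

def summarize(runs: list) -> dict:
    """Compute accuracy and median latency for one set of pilot calls."""
    correct = sum(1 for run in runs if run["correct"])
    return {
        "accuracy": correct / len(runs),
        "median_latency_ms": median(run["latency_ms"] for run in runs),
    }

# Placeholder rows only; replace with your own recorded pilot calls.
legacy_runs = [
    {"correct": False, "latency_ms": 2100},
    {"correct": True, "latency_ms": 1800},
    {"correct": True, "latency_ms": 2400},
    {"correct": False, "latency_ms": 1900},
]
optimized_runs = [
    {"correct": True, "latency_ms": 700},
    {"correct": True, "latency_ms": 650},
    {"correct": True, "latency_ms": 800},
    {"correct": False, "latency_ms": 900},
]

legacy_summary = summarize(legacy_runs)
optimized_summary = summarize(optimized_runs)
print("legacy:   ", legacy_summary)
print("optimized:", optimized_summary)
```

Running the same top-10 inquiries against both article sets keeps the comparison fair, since the only variable is the knowledge the agent retrieves.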